The emergence of artificial intelligence has generated an ambivalent emotional response: fascination with its capabilities and, at the same time, a diffuse unease about the future. This unease is not new. Every major technological transformation — the Industrial Revolution, electrification, digitalisation — has altered social, professional and psychological structures. What is different now is the speed and depth with which change is perceived.

The current discomfort does not stem solely from the technology itself, but from what it represents: a sense of loss of control, uncertainty about one’s own value, and the implicit threat to traditional roles. When the future becomes difficult to predict, the human mind tends to respond with anxiety. In some cases, this anxiety takes more structured forms, such as repetitive thoughts and the need for control characteristic of obsessive-compulsive disorder.

From a deeper psychological perspective, artificial intelligence can be seen as a “mirror” that amplifies conflicts that already exist. It does not introduce fear; it reveals it. The question therefore shifts from “what will AI do to us?” to “which parts of ourselves have we been avoiding until now?”

Throughout history, every technological advance has forced society to reorganise itself. It is not merely a matter of replacing jobs, but of redefining the meaning of time, effort and personal value. Automation, far from being only a threat, opens up the possibility of something that has historically been scarce: free time.

This point is crucial. More free time does not automatically lead to well-being. In fact, it can generate more anxiety if one does not know how to inhabit it. As external pressure decreases, internal conflicts become more visible. In individuals with obsessive tendencies, this may translate into increased rumination: repetitive thoughts, persistent doubts, and a need for certainty.

At this point, a new demand emerges, but also an opportunity: the need to be more honest with oneself.

Radical honesty involves recognising what one truly feels, what one avoids, and what one fears. It is not a comfortable exercise. It requires abandoning defensive narratives — “everything is under control”, “this does not affect me” — and admitting vulnerability. However, this honesty has a stabilising effect: it reduces the internal tension that fuels anxiety.

Assertiveness

Closely linked to this is another essential element: assertiveness.

Being assertive is not only about communicating effectively with others, but also about establishing a clearer relationship with oneself. It means being able to say “this affects me”, “I do not accept this”, “I need this”. In a context where external structures are shifting, internal clarity becomes an anchor. For those with obsessive tendencies, this is particularly relevant: the more diffuse the sense of identity, the greater the space for pathological doubt.

Community

The second major axis, alongside honesty with oneself, is community.

If technology reduces the need for functional interaction (work, administration, production), it increases the importance of meaningful interaction. Relationships cease to be a means and become an end. Without community, the individual is left exposed to their own mind without a counterbalance. With community, thoughts are relativised, challenged, and humanised.

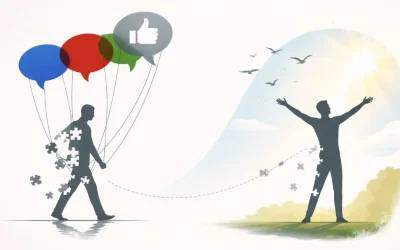

In this sense, AI can act as a catalyst for a deeper transformation: a shift from a society centred on productivity to one centred on relationship and awareness.

With regard to obsessive-compulsive disorder, this shift has important implications. OCD feeds on the illusion of absolute control and intolerance of uncertainty. Artificial intelligence, by making it clear that the world is increasingly complex and unpredictable, may initially intensify this anxiety. However, it can also push towards a more mature adaptation: accepting uncertainty as a structural condition of life.

When this acceptance occurs, the energy previously invested in controlling the uncontrollable can be redirected towards understanding one’s own fears. Not eliminating them, but seeing them more clearly. And in that clarity, they lose part of their power.

The key, therefore, is not to resist technological change, but to accompany it with an equivalent psychological shift.

Artificial intelligence does not determine human destiny. It places it under tension. It exposes it. It forces it to reorganise. And in that process, it offers something that is rarely mentioned: the possibility of living with greater awareness, stronger connection, and less self-deception.

The challenge is not technological. It is profoundly human.

Damián Ruiz

Barcelona, April 2026

www.ipitia.com